Leveraging psychology to test code

Unit tests. Hands up who's thought about creating unit tests? All of you? OK then, who's actually got a project with large-scale test coverage? That's what I thought.

The idea of unit tests and their benefits is drummed into developers on every blog, at every conference, and in most Stack Overflow posts. In most projects, though, tests remain either stubbornly missing or stuck at pathetically low coverage.

It doesn't matter how big your company is or how experienced your team is: when a crunch happens, tests are the first thing to get added to the backlog of technical debt and, once there, they gather dust like so many running machines in people's homes.

While unit tests are not the be-all and end-all of testing your code, they are an amazingly useful resource for catching bugs before your code goes live and for showing up code that is overly complex. As such, they are something we should be striving toward.

One of the less talked-about benefits of unit tests is that they provide insight into what the developer was thinking at the time the code was written. Some devs may leave useful comments, but comments are not exactly what developers are known for, so having a "how-to" guide in the form of unit tests is handy.

In this blog post, I'm going to cover a system I've used for adding tests to a project I worked on, which a colleague defined as "leveraging psychology in developers". This project was an MVP experiment which, as these things will do, quickly turned into a full-blown platform. The same techniques will work with a legacy project, whether or not it has some level of existing tests.

A word of warning, though: this post is about how to help your team create tests. It won't completely help you if your code is overly complex and needs massive refactoring, but it may help a little. Read on anyway and see what you think.

Headology

One of the main problems I found with starting to write unit tests on a large project is the depressing nature of it all. You spend hours writing some tests that aren't too brittle but thoroughly check your logic, and then run a coverage report and realise you've covered 0.43% of your project.

Most projects, without some tweaking of the settings, will never get to 100%, which is a shame because that's exactly what we want. When you have 100% code coverage, the only way is down. If someone has missed something out of testing, you and the entire team will notice and someone will say something.

The idea is similar to the broken windows theory: in a run-down area that already has broken windows, no one cares about a few more. If there's litter around, people are more likely to drop litter, and so on.

Unit tests can be a pain to write well, so if some are missing then developers, who are normally intrinsically lazy, can and will skip writing new ones or fixing broken ones. That leads to a drop in coverage, fewer people caring about it, and a cycle that eventually renders your unit tests defunct.

A little goes a long way

If it's going to help us so much, how do we get to 100%? Well, we cheat. Only a little, but we still cheat. First, if it's a new or a small project, think about what you actually want to be testing. You use unit tests to check your logic, so do you really want to be checking your controllers, which should hopefully be as logic-free as possible?

If it's a legacy project, think about what you want to test as well, but also about what has working tests now and what you want to test first.

Now that you've done that, use your unit test runner (for these examples I've used PHPUnit) and whitelist everything that has working tests. The following shows an example of a phpunit.xml file from my project.

    <?xml version="1.0" encoding="UTF-8"?>
    <!-- https://phpunit.de/manual/current/en/appendixes.configuration.html -->
    <phpunit xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:noNamespaceSchemaLocation="http://schema.phpunit.de/4.8/phpunit.xsd"
             backupGlobals="false"
             colors="true"
             bootstrap="app/autoload.php"
    >
        <php>
            <ini name="error_reporting" value="-1" />
            <server name="KERNEL_DIR" value="app/" />
        </php>

        <testsuites>
            <testsuite name="Project Test Suite">
                <directory>tests</directory>
            </testsuite>
        </testsuites>

        <filter>
            <whitelist>
                <directory>src</directory>
                <exclude>
                    <directory>src/*Bundle/Resources</directory>
                    <directory>src/*/*Bundle/Resources</directory>
                    <directory>src/*/Bundle/*Bundle/Resources</directory>
                    <!-- Ignores folders we don't want to test -->
                    <directory>src/Eagle/APIBundle/Command</directory>
                    <directory>src/Eagle/APIBundle/Controller</directory>
                    <directory>src/Eagle/APIBundle/Entities</directory>
                    <directory>src/Eagle/APIBundle/Repositories</directory>
                </exclude>
            </whitelist>
        </filter>
    </phpunit>

Note the above ignores all resources directories, as well as commands, controllers, entities, and repositories. This is because in this project, the entities and repositories are for a future phase so we don't want to test them yet.

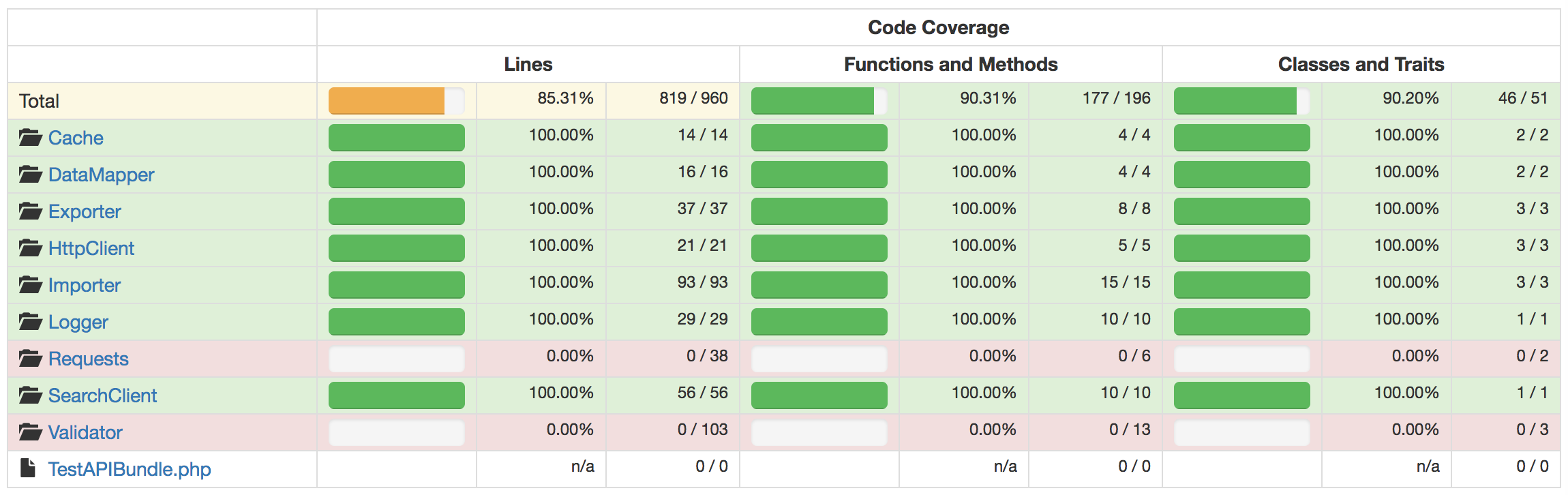

Now check your code coverage. What's it at, 80%? 90%? Spend the next hour or two looking at the tests you've got and then write the missing ones. Use code coverage reports to see what logic is missing a test and write a test to cover those.

    vendor/bin/phpunit --coverage-html reports

When I come to writing tests, I focus on a single logical path through the method, such as the one where every "if" statement is true (the number of these paths through a given method is called its NPath complexity). Then I give each test a long name that explains what's going on.

After covering that first path, I'll then create tests that complete the other paths. Sometimes these tests can be smaller than the first one as sometimes you just want to be testing a specific piece of logic in that method.
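As a rough illustration of the approach above, here is a minimal sketch. The `deliveryCost()` function and its surcharge values are hypothetical, invented purely for this example. It has two independent "if" statements, so there are four paths through it. The test class follows the `\PHPUnit_Framework_TestCase` style used elsewhere in this post; newer PHPUnit versions use `PHPUnit\Framework\TestCase` instead.

```php
<?php

// Hypothetical function under test: two independent "if" statements
// give four possible paths through it.
function deliveryCost(float $base, bool $express, bool $international): float
{
    $cost = $base;
    if ($express) {
        $cost += 5.0;
    }
    if ($international) {
        $cost += 10.0;
    }
    return $cost;
}

class DeliveryCostTest extends \PHPUnit_Framework_TestCase
{
    // First path: every "if" statement is true.
    public function testExpressInternationalOrderAddsBothSurcharges()
    {
        $this->assertSame(25.0, deliveryCost(10.0, true, true));
    }

    // The remaining paths get smaller, more focused tests.
    public function testExpressDomesticOrderAddsOnlyExpressSurcharge()
    {
        $this->assertSame(15.0, deliveryCost(10.0, true, false));
    }

    public function testStandardInternationalOrderAddsOnlyInternationalSurcharge()
    {
        $this->assertSame(20.0, deliveryCost(10.0, false, true));
    }

    public function testStandardDomesticOrderCostsTheBasePrice()
    {
        $this->assertSame(10.0, deliveryCost(10.0, false, false));
    }
}
```

The long method names mean a failing test tells you which path broke before you've even opened the file.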

PHP 7 lets you create anonymous classes for test doubles. I make heavy use of these because my tests become self-contained instead of relying on external classes for my spies and fakes.

An example of a test for my project can be seen below. It uses the setUp() method to create an anonymous class that acts as a spy, storing whenever something calls the set() method for a config.

    <?php

    namespace Test\APIBundle\Tests\ExternalConfig\RequestConfig;

    use Test\APIBundle\ExternalConfig\AbstractConfig;
    use Test\APIBundle\ExternalConfig\ConfigFields;
    use Test\APIBundle\ExternalConfig\RequestConfig\TestRequestConfigDecorator;

    class TestRequestConfigDecoratorTest extends \PHPUnit_Framework_TestCase
    {
        private $config;

        public function setUp()
        {
            // Spy: records every call to set(), then delegates to the parent implementation.
            $this->config = new class extends AbstractConfig {
                public $logs = [];

                public function set(string $option, $value): void
                {
                    $this->logs[] = ['method' => 'set', 'option' => $option, 'value' => $value];
                    parent::set($option, $value);
                }

                public function getUniqueKey(): string
                {
                    return '';
                }
            };
        }

        public function testRADAIsSetWhenPassed()
        {
            $testParams = [ConfigFields::RADA => 'test_rada'];

            new TestRequestConfigDecorator($this->config, $testParams);

            // The first recorded call should set the RADA option to the passed value.
            $this->assertEquals('set', $this->config->logs[0]['method']);
            $this->assertEquals('request_rada', $this->config->logs[0]['option']);
            $this->assertEquals('test_rada', $this->config->logs[0]['value']);
        }

        public function testRADAIsSetToNullWhenNotPassed()
        {
            new TestRequestConfigDecorator($this->config, []);

            $this->assertEquals('set', $this->config->logs[0]['method']);
            $this->assertEquals('request_rada', $this->config->logs[0]['option']);
            $this->assertEquals(null, $this->config->logs[0]['value']);
        }
    }

Check, check, and check again

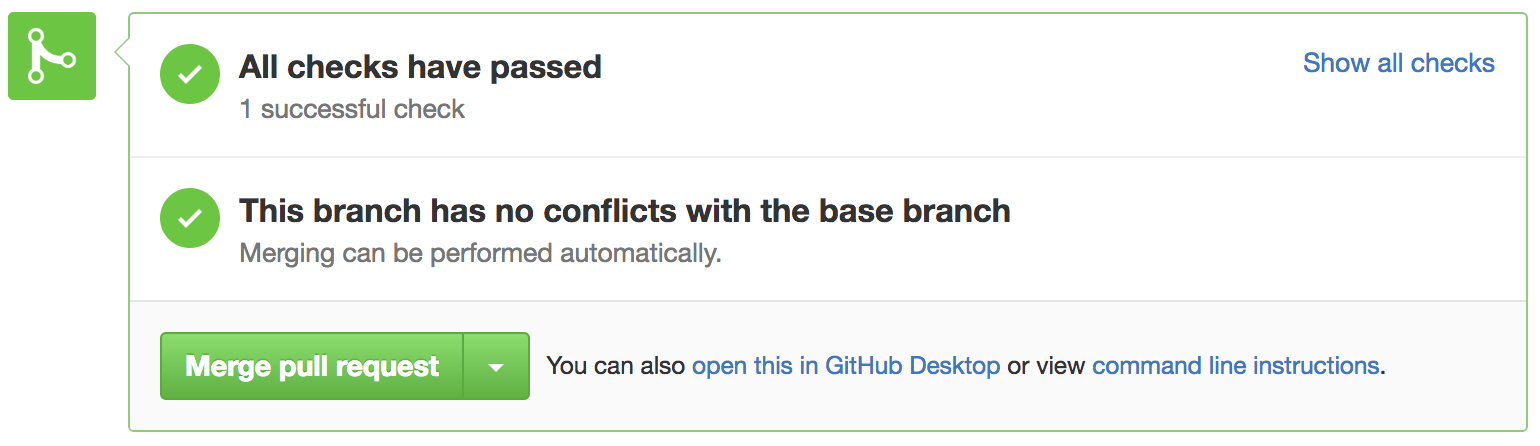

We've now got 100% code coverage, even if it's only for specific sections of the code, so it's time to make sure we keep it. Make it part of the team's code-review practice to double-check that every pull request has tests in it.

You can go beyond code reviews as well, but it's important to recognise that code reviews are needed to make sure the tests aren't just gibberish and are actually testing something useful (remember when I described developers as intrinsically lazy? Any way to game the system will be used).
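To make the "gibberish" point concrete, here is a small sketch built around a hypothetical `applyDiscount()` function (invented for illustration). Both tests below execute every line of it, so a coverage report can't tell them apart, but only the second would notice a wrong result. Depending on your PHPUnit version and settings, the first may be reported as risky, but that is no substitute for a reviewer reading the test.

```php
<?php

// Hypothetical function under test.
function applyDiscount(float $price, float $discount): float
{
    return $price - $discount;
}

class ApplyDiscountTest extends \PHPUnit_Framework_TestCase
{
    // Covers every line of applyDiscount() but checks nothing:
    // it would still pass if the function returned the wrong value.
    public function testApplyDiscountRuns()
    {
        applyDiscount(100.0, 20.0);
    }

    // Covers the same lines and verifies the result.
    public function testDiscountIsSubtractedFromPrice()
    {
        $this->assertSame(80.0, applyDiscount(100.0, 20.0));
    }
}
```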

The helpful driver behind this, though, is that everyone benefits from 100% code coverage, so everyone should be engaged enough to keep it that way.

To hammer home how important tests are, my project has gone that one step further. Our GitHub pull requests trigger a build in Jenkins that automatically runs all tests, stores the code coverage graphs, and, for good measure, runs a PHP code sniffer to check our coding standards.

This automation also prevents a pull request from being merged unless there is still 100% code coverage. If you view the bugs that good test coverage prevents as the carrot, then this Jenkins task and the block on GitHub PRs are definitely the stick.
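How the build enforces the threshold will depend on your setup, and this post doesn't cover the Jenkins configuration itself. As one possible approach, the sketch below reads a Clover XML report (which PHPUnit can write with `--coverage-clover`) and exits with a non-zero code when statement coverage drops below 100%. The script name `check-coverage.php` and the report path are assumptions made for this example.

```php
<?php

// Sketch of a coverage gate. Assumes a Clover XML report, e.g. one produced by:
//   vendor/bin/phpunit --coverage-clover reports/clover.xml

/**
 * Returns the project-wide statement coverage (0-100) from Clover XML.
 */
function coveragePercent(string $cloverXml): float
{
    $report = new SimpleXMLElement($cloverXml);
    if (!isset($report->project->metrics)) {
        throw new RuntimeException('No project metrics found in report');
    }

    $metrics = $report->project->metrics;
    $total = (int) $metrics['statements'];
    $covered = (int) $metrics['coveredstatements'];

    // Nothing to cover counts as fully covered.
    return $total === 0 ? 100.0 : $covered / $total * 100;
}

// Run as: php check-coverage.php reports/clover.xml
if (PHP_SAPI === 'cli' && isset($argv[1])) {
    $xml = file_get_contents($argv[1]);
    if ($xml === false) {
        fwrite(STDERR, "Could not read {$argv[1]}\n");
        exit(2);
    }

    $percent = coveragePercent($xml);
    printf("Statement coverage: %.2f%%\n", $percent);

    // A non-zero exit code fails the build step.
    exit($percent < 100.0 ? 1 : 0);
}
```

Run as a build step after the tests, a script like this turns a drop in coverage into a failed build, which is what stops the merge.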

More, more, more

Hopefully you can use the above advice to keep 100% test coverage on parts of your projects. The last step is expanding what's covered by the whitelist. The answer to that is to go slowly and block out time to work on this technical debt. Make sure any new code is added to the whitelist as well, as it's easy to forget.

If you've any comments, then please get in touch.